In the ever-evolving landscape of human-computer interaction, the recent MobileHCI 2023 conference gave the XR2Learn project a stage to showcase its immersive capabilities. The conference's focus on the intersection of mobile technology and human-computer interaction made it a fitting backdrop for our project, offering attendees a glimpse into the future of interactive experiences. XR2Learn did more than participate: it demonstrated its achievements in the XR field to the mobile HCI community.

MobileHCI 2023, held in Athens, Greece, was sponsored by the ACM Special Interest Group on Computer-Human Interaction (SIGCHI), probably the largest international community in the field, with more than 9,000 individuals attending its sponsored events and more than 2,800 paid members. MobileHCI focuses on HCI aspects, techniques and approaches for all mobile and wearable computing devices and services. XR2Learn’s presence at the forum combined demos at a dedicated XR2Learn booth with presentations on recent project developments.

Vasilis Zafeiropoulos (HOU) described the V-Lab VR Educational Application Framework. V-Lab is an extension of Onlabs (HOU’s virtual biology laboratory) developed for the XR2Learn project. It offers the same functionality as Onlabs, plus advanced graphics, an improved PC user interface, a brand-new VR interface, and a modular architecture that supports developing and customizing different scenarios.

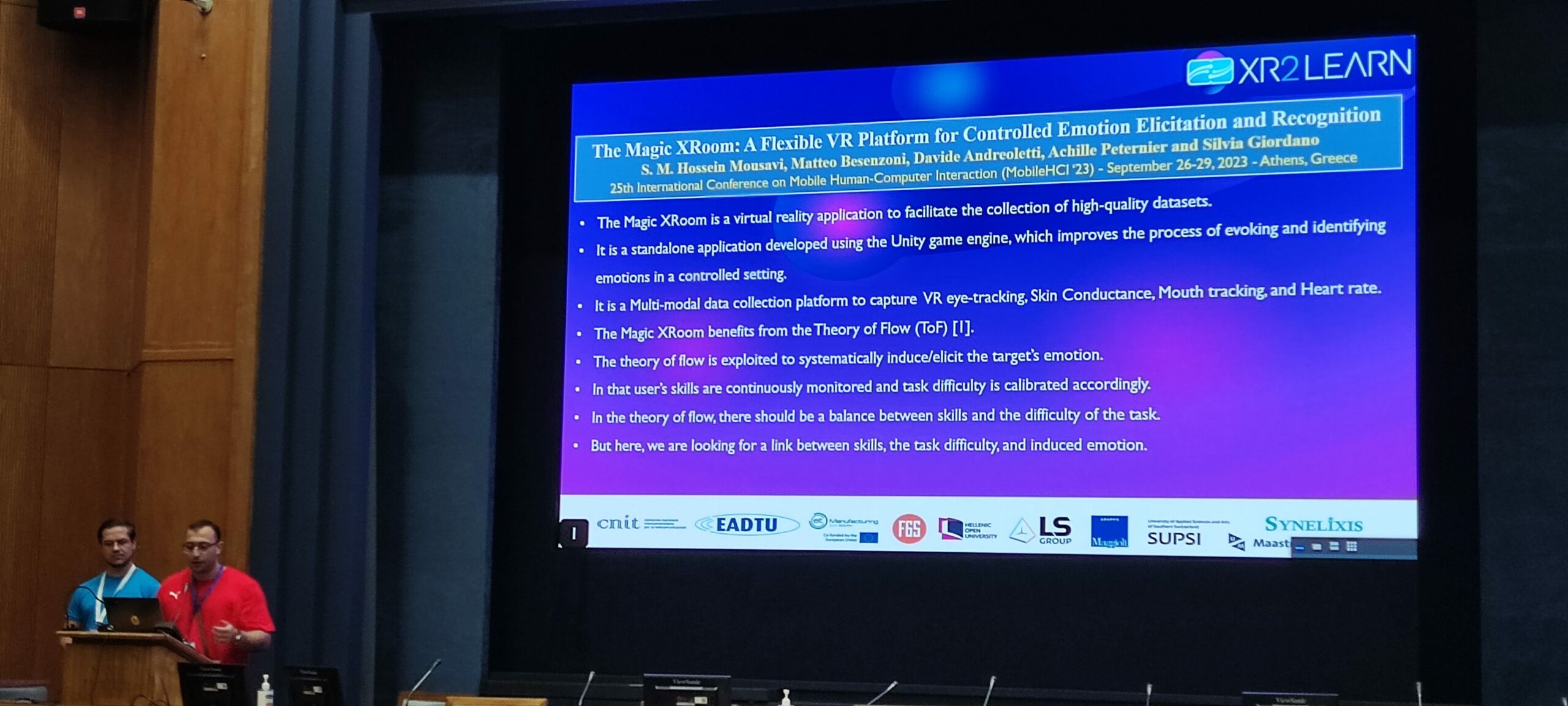

Hossein Mousavi of the SUPSI team described in detail their work on the Magic Xroom: A Flexible VR Platform for Controlled Emotion Elicitation and Recognition. The Magic Xroom is an immersive standalone VR application developed using the Unity game engine and the SteamVR framework. Its purpose is to improve the process of evoking and identifying emotions in a controlled setting. The Magic Xroom provides a controlled and consistent environment for conducting experiments and collecting data, which lets researchers methodically investigate different facets of emotional reactions in order to adjust the difficulty of VR training scenarios. As a result, it serves as a valuable resource for advancing research, creating more accurate emotion recognition algorithms, and refining emotional models.

Antoine Lasnier from LS GROUP presented INTERACT: An authoring tool that facilitates the creation of human-centric interaction with 3D objects in virtual reality. INTERACT is a Unity plugin that helps users with little technical knowledge to swiftly set up a 3D scene in Unity, give objects physical behavior and gamify the scenario. The team also demonstrated INTERACT with a scenario on how to maintain a Trotec Speedy 400 laser cutting machine. The main features are:

- Embedded physics engine: This handles multi-body dynamics, collision detection, friction, and kinematics, providing realistic behavior for objects in a 3D environment.

- Natural object interaction: This feature allows users to directly manipulate 3D objects with their own hands, providing a more natural and intuitive way to interact with the virtual environment.

- Scenarization: This is a module designed for creating and editing complex assembly scenarios. INTERACT provides the Scenario Graph to build a hierarchy of steps that defines an assembly sequence.
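
To make the idea of a step hierarchy concrete, here is a minimal Python sketch of how a tree of steps could be flattened into an ordered assembly sequence. It is only an illustration under our own assumptions, not INTERACT's Scenario Graph API: the `Step` class, the `assembly_sequence` function, and the maintenance step names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Iterator, List


@dataclass
class Step:
    """One node in a hypothetical scenario hierarchy (not an INTERACT type)."""
    name: str
    children: List["Step"] = field(default_factory=list)


def assembly_sequence(step: Step) -> Iterator[str]:
    """Yield the names of leaf steps depth-first, in the order they are listed."""
    if not step.children:
        yield step.name
        return
    for child in step.children:
        yield from assembly_sequence(child)


# Invented example steps, loosely inspired by a machine maintenance scenario.
scenario = Step("Maintain laser cutter", [
    Step("Prepare", [Step("Power off machine"), Step("Open lid")]),
    Step("Service", [Step("Remove lens"), Step("Clean lens"), Step("Refit lens")]),
    Step("Finish", [Step("Close lid"), Step("Power on machine")]),
])

sequence = list(assembly_sequence(scenario))
for index, name in enumerate(sequence, start=1):
    print(f"{index}. {name}")
```

Grouping steps under parent nodes keeps a long procedure readable for the scenario author, while the depth-first walk recovers the single linear order that a trainee would follow.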